ARC-AGI-3 offers $2M to any AI that matches untrained humans, yet every frontier model scores below 1%

ARC-AGI-3 challenges AI with new benchmark

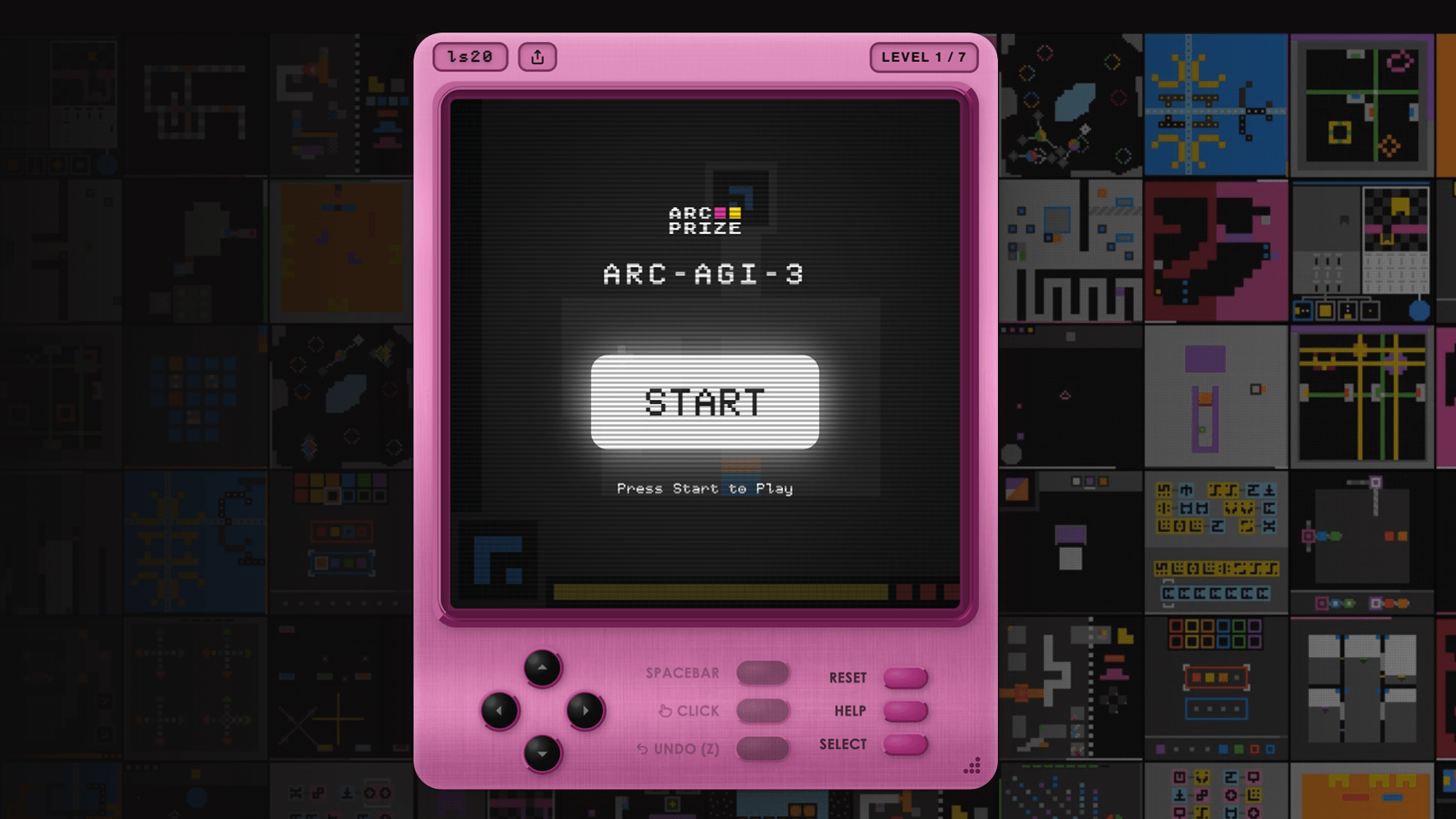

The ARC Prize Foundation has introduced ARC-AGI-3, a benchmark that tests AI systems in interactive game environments that untrained humans can navigate easily. A $2 million prize awaits any AI that can match human performance, yet all tested frontier models, including Gemini 3.1 Pro Preview and GPT 5.4, scored below 1 percent, highlighting the gap in adaptability between AI and human intelligence.

Key Takeaways

1. All tested frontier models scored below 1 percent on the new ARC-AGI-3 benchmark.
2. The benchmark requires AI to explore and solve tasks without prior instructions.
3. The ARC Prize Foundation is offering $2 million for any AI that matches human performance on the benchmark.
