AI models fail at robot control without human-designed building blocks, but agentic scaffolding closes the gap

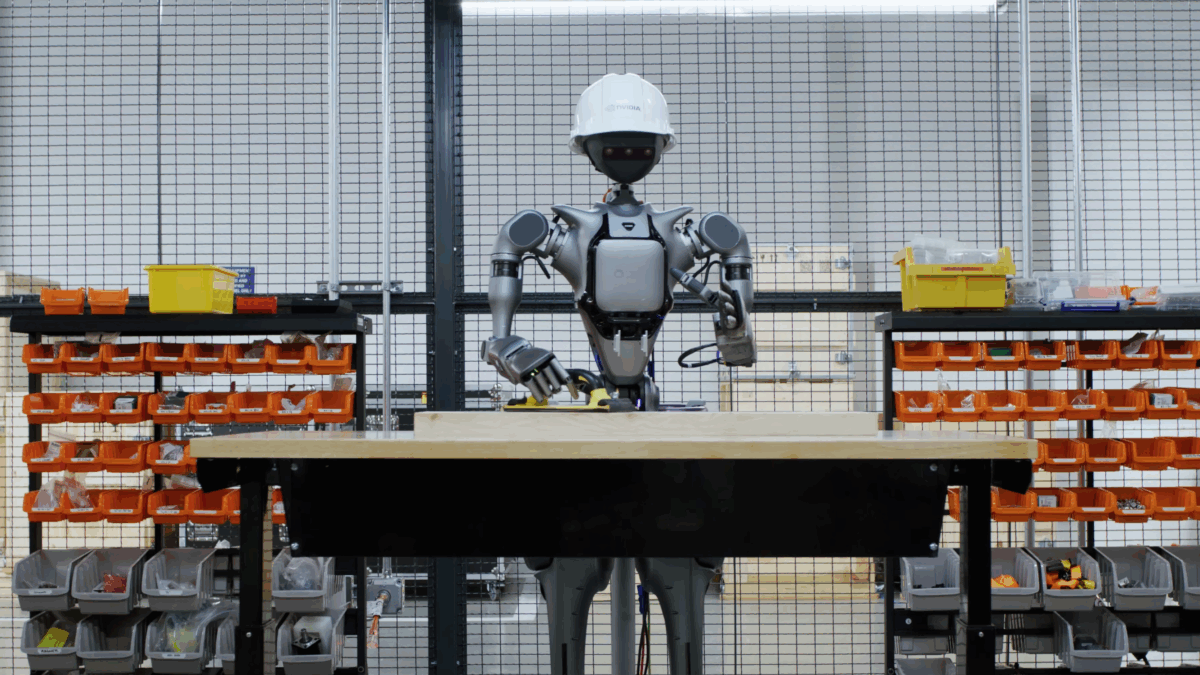

Nvidia and partners unveil new AI framework for robot control

A collaborative effort by Nvidia, UC Berkeley, and Stanford has found that AI models struggle to control robots effectively without human-designed building blocks. Using their new framework, CaP-X, the researchers systematically tested twelve leading AI models and found that none matched the reliability of human-written control code. Targeted techniques narrowed the gap, however. CaP-Agent0, a training-free system that uses components such as a Visual Differencing Module, performed comparably to human programmers on several tasks, and reinforcement learning separately raised the success rate of a small coding model.

Key Takeaways

1. None of the twelve AI models tested matched the reliability of human-written code.
2. The new CaP-Agent0 system can outperform human-written code on four of seven tasks.
3. Reinforcement learning improved a Qwen2.5-Coder-7B model's success rate from 4% to 76% in real-world tests.
