Qwen3.5-Omni learned to write code from spoken instructions and video without anyone training it to

Alibaba's Qwen3.5-Omni learns coding from audio-visual input

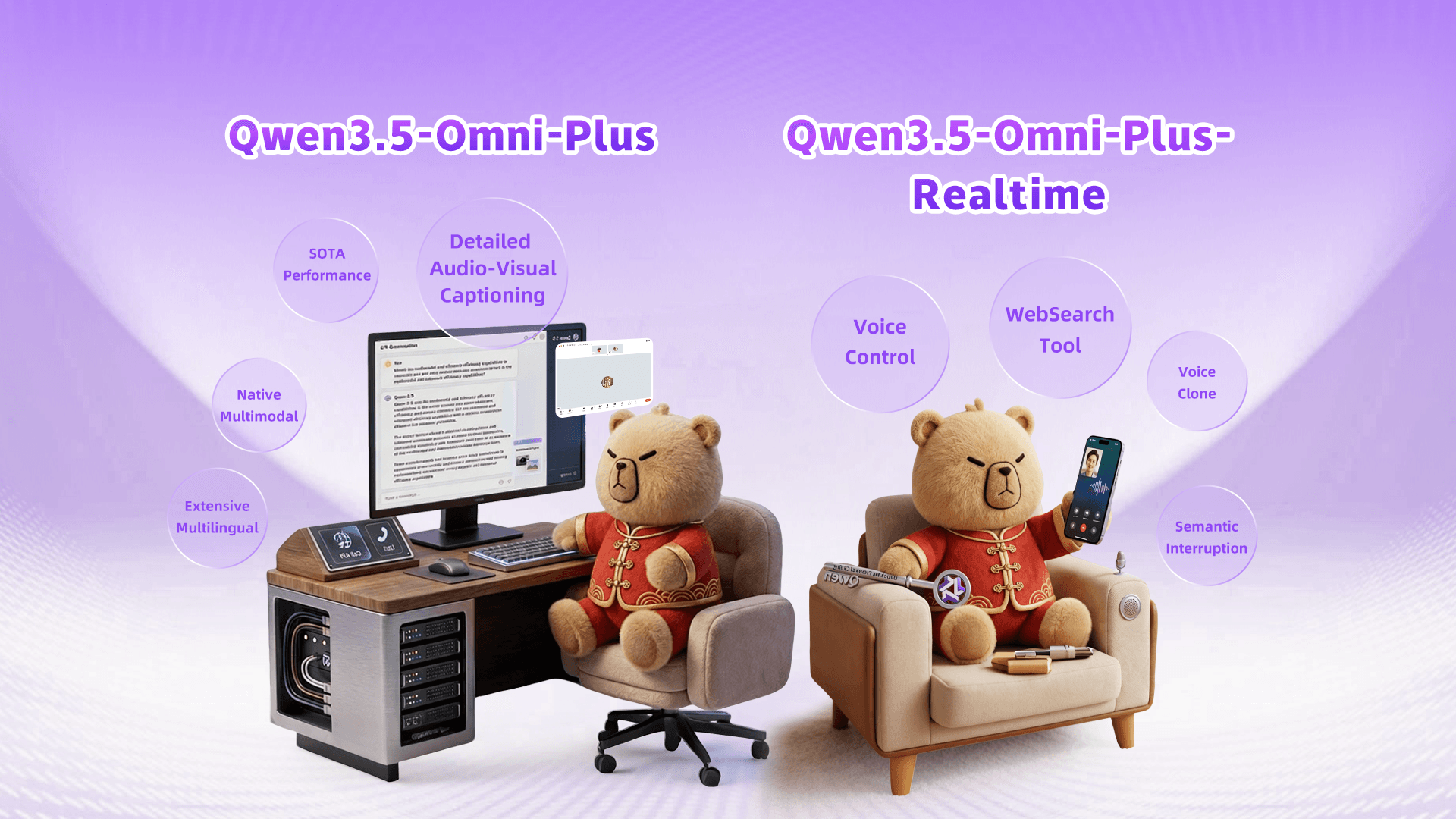

Alibaba has introduced Qwen3.5-Omni, a cutting-edge omnimodal AI model capable of processing text, images, audio, and video. This model not only excels in audio tasks, outperforming Google's Gemini 3.1 Pro, but also demonstrates an unexpected ability to write code based on spoken instructions and video content, a feature that emerged during its training. With significant improvements in language support and processing capabilities, Qwen3.5-Omni marks a notable advancement in AI technology.

Key Takeaways

- 1.

Qwen3.5-Omni supports 74 languages for speech recognition, a significant increase from 11.

- 2.

The model can process over 10 hours of audio and 400 seconds of video.

- 3.

Qwen3.5-Omni-Plus sets a new state of the art on 215 audio benchmarks.

Get your personalized feed

Trace groups the biggest stories, videos, and discussions into one feed so you can stay current without scanning ten tabs.

Try Trace free